Introduction

Modern ISA extensions and hardware accelerators created the demand for raising program idioms to acceleration instructions or optimized library calls. Although recent works have proposed different ways of raising code abstraction, they rely on specialized languages, compiler recompilation, or in-depth dialect knowledge. With this in mind, we developed Source-based Matching and Rewriting (SMR), an MLIR based user-oriented tool for pattern matching and rewriting that relies on source code to simplify pattern and replacement specifications. The goal of this RFC is to summarize SMR’s design, implementation, and limitations, and to get community feedback.

- More details in “Source Matching and Rewriting for MLIR Using String-Based Automata”.

- The source code can be found in this repository.

PAT

To define rewriting operations, SMR uses a Pattern Template file (PAT) in which rewrites are specified using a macro definition that pairs two snippets of source code together. The structure of a PAT rewrite specification can be found below.

1 lang {

2 fun (type1 arg1, type2 arg2,...) {

3 match

4 }

5 } = {

6 fun (type1 arg1, type2 arg2,...) {

7 replacement

8 }

9 }

Listing 1 - Replacing matchA with rewriteB both written in lang.

In the first section, lang (line 1) specifies the source language used in the rewrite, followed by the declaration of the pattern’s wrapper function declaring its arguments and their corresponding types (line 2). The pattern itself is described in the body (match) of the wrapper function (line 3). The second section (lines 5–9) contains the replacement code that will rewrite the idiom when a match is found. The wrapper function declaration (line 6) matches the arguments of the idiom to the variables used by the matched input fragment. In the case of an idiom match, the matched input fragment is replaced by the code in replacement. A PAT file can have multiple specifications like the one above.

The wrapper functions in the pattern and replacement serve two purposes. First, they make the codes valid, as the front end of the language (lang) used must be able to compile them. Second, they act as an interface between the input, pattern, and replacement codes which facilitates the rewrite process. In the matching process, SMR considers the wrapper function arguments’ order and types, as well as the function’s body, ignoring the function’s and arguments’ names.

Through PAT, it is possible for users to easily specify rewrites. For example, the PAT below replaces a naive dot product with a BLAS call:

C {

void dot(int N, float *x, int ix, float *y, int iy, float *out) {

for (int i = 0; i < N; i++)

*out += x[i*ix] * y[i*iy];

}

} = {

#include <cblas.h>

void dot(int M, float *p, int ip, float *q, int iq, float *out) {

*out += cblas_sdot(M, p, ip, q, iq);

}

}

Listing 2 - Raising dot-product by replacing it with a BLAS call using SMR.

Each rewrite specification in the PAT file must be lowered to MLIR to be used by SMR. For the PAT file to be valid, every source language used must have a supported frontend to it to MLIR. Once lowered, the same structure remains, but every pattern and replacement is represented as MLIR.

Compilation

SMR has two main compilation flows. The first occurs when compiling and serializing a PAT file into a Pattern Finite Automaton (PFA) file. The second happens when matching an input file into an MLIR file against the PFA. Both flows are shown in the figures below. There’s technically a third one which is when matching an input against a raw PAT file, but it is not shown here as it is not the recommended way of using SMR. In the following sections, we will describe each flow in detail using Fortran source files as an example.

Fig. 1 - Serialization Compilation Flow & Fig. 2 - Matching Compilation Flow

When serializing, the PAT Builder separates① each pattern/replacement code available in patterns.pat into pairs of Fortran files: idiom.f90 and replacement.f90②. These files are compiled using Flang, generating MLIR (i.e. FIR) codes③. Pattern and replacement FIR codes are then used by the SMR algorithm to build a PFA automaton⑤ that is stored into patterns.pfa⑦. The .pfa file serves as a library of patterns/replacements that can be used many times by SMR to detect idioms on arbitrary input programs.

When matching, it takes a input.90⑧ source code file that is the input to be optimized, alongside a PFA file patterns.pfa⑩. The PFA file is used by the SMR algorithm to recreate its automata: the Control Dependency Graph (CDG) and the Data Dependency Graph (DDG). The input.mlir file is fed to the CDG to find a section of the input code that matches the control flow of some patterns. These sections are then passed to the DDG to verify if the dataflow also matches. If so, the input code is rewritten with the proper replacement. Regular MLIR compilation resumes with the lowering to LLVM IR after that.

Note that in both flows the source code must go through an MLIR front end that will lower it to generic MLIR. This allows SMR to run the matching and rewriting algorithm using MLIR’s generic representation as a common ground.

Algorithm

SMR’s matching algorithm is divided into two phases: Control Dependency Graph (CDG) matching and Data Dependency Graph (DDG) matching. The CDG matching is responsible for finding sections of the input program that match the control flow of some pattern. Its lightweight representation can be reduced to a tree, making it fast to both build and match against. This allows SMR to quickly filter the possible match candidates among huge input codes greatly reducing the search space. The second stage, DDG matching, is responsible for verifying if the data flow of the input program also matches the data flow of the pattern. It requires a heavier representation with more information, but it is only applied to the sections of the input program that passed the CDG matching. Both graphs are represented as automata to compress them, similarly as proposed in Aho et al. Automata can also be easily serialized and saved for reuse, thus removing the need to rebuild the patterns’ CDG and DDG automata.

A bit of nomenclature:

- Region Defining Operation (RDO) is an MLIR operation that defines at least one region.

- Control-string is a string used to build the CDG that represents the control flow of a program.

- Data-string is a string used to build the DDG that represents one of the data paths of a program.

CDG Matching

The CDG matching stage takes advantage of MLIR’s hierarchical representation of operations, regions, and basic blocks to quickly rule out sections of the input code that can’t match any pattern. Consider the Fortran code and its corresponding CDG control-string representation below. There, curly brackets delimit regions, SEQ is non-empty sequences MLIR operations, and strings (e.g. "fir.if") are RDOs.

subroutine sum(test, val) | "fir.if" {

integer :: val, test | SEQ

IF (test == 1) THEN | } {

val = 1 | SEQ

ELSE | "fir.if" {

val = val - 1 | SEQ

IF (val == 1) THEN | } {

test = 0 | SEQ

END IF | }

END IF | SEQ

end subroutine | }

Listing 3 - Fortran idiom code and its respective control-string.

The essence of the CDG graph is to represent the control flow of the input program as a single string. In the example above, we could represent the control flow with the following control-string:

"fir.if" { SEQ } { SEQ "fir.if" { SEQ } { SEQ } SEQ }

Multiple patterns generate multiple CDG strings that can be encoded into a single automaton. Once an input is given, we generate the CDG string for this input and seek substrings within it that match the control-string of some pattern. If a match is found, we retrieve the operation that generated the matched substring from the input and pass it to the DDG matching.

It’s important to reiterate that the CDG stage does not tell us that the input code is a match, but it is a fast way to know which sections of the input might be a match.

DDG Matching

After CDG isolates the points of interest in the input code, we proceed to the Data Dependency Graph (DDG) matching stage. Here, a more fine-grained match finds if the data flow of the input code snippets filtered by the CDG matches against the data flow of some pattern. If there’s a match, we can then proceed to the rewriting stage.

Input and patterns’ DDGs are built from their generic MLIR representations using a combination of ud-chains and regions. Also, relevant particularities of a dialect are encoded in the strings through SMR’s dialect-wise integration, allowing important attributes to be matched, and irrelevant ones, ignored. The example configuration below shows the FIR configuration for the fir.cmpf and fir.if operations.

fir:

# The predicate attribute defines the comparison being made in the operations below.

cmpf:

must-match-attr: predicate

if:

must-match-attr: predicate

Listing 4 - SMR’s dialect-wise configuration example.

Building the DDG

One of the advantages of operating SMR on top of a generic MLIR representation is that it can be interpreted as a Rooted Directed Acyclic Graph (RDAG) by using the MLIR regions and ud-chains. In other words, there is no need to build the graph, only to interpret it as an RDAG and encode it as a set of strings.

1 "func"() ( {

2 ^bb0(%arg0: !fir.ref<i32>, %arg1: !fir.ref<i32>):

3 %c1_i32 = "std.constant"() {value = 1 : i32} : () -> i32

4 %c0_i32 = "std.constant"() {value = 0 : i32} : () -> i32

5 %0 = "fir.load"(%arg0) : (!fir.ref<i32>) -> i32

6 %1 = "std.cmpi"(%0, %c1_i32) {predicate = 0 : i64} : (i32, i32) -> i1

7 "fir.if"(%1) ( {

8 "fir.store"(%c1_i32, %arg1) : (i32, !fir.ref<i32>) -> ()

9 "fir.result"() : () -> ()

10 }, {

11 %2 = "fir.load"(%arg1) : (!fir.ref<i32>) -> i32

12 %3 = "std.subi"(%2, %c1_i32) : (i32, i32) -> i32

13 "fir.store"(%3, %arg1) : (i32, !fir.ref<i32>) -> ()

14 %4 = "fir.load"(%arg1) : (!fir.ref<i32>) -> i32

15 %5 = "std.cmpi"(%4, %c1_i32) {predicate = 0 : i64} : (i32, i32) -> i1

16 "fir.if"(%5) ( {

17 "fir.store"(%c0_i32, %arg0) : (i32, !fir.ref<i32>) -> ()

18 "fir.result"() : () -> ()

19 }, {

20 "fir.result"() : () -> ()

21 }) : (i1) -> ()

22 "fir.result"() : () -> ()

23 }) : (i1) -> ()

24 "std.return"() : () -> ()

25 }) {sym_name = "_QPsum", type = (!fir.ref<i32>, !fir.ref<i32>) -> ()} : () -> ()

Listing 5 - MLIR FIR code for the idiom in Listing 3.

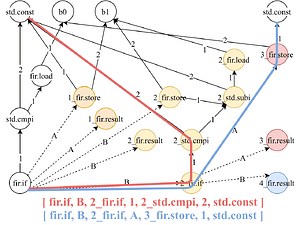

Fig. 3 - MLIR use-def chain graph & Fig. 4 - DDG graph (RDAG) and stringification

Each MLIR operation in the DDG is a node labeled by its name and relevant attributes. They are connected by MLIR’s ud-chains, used as directed edges (use->def). Since there are operations in which the order of arguments matters (p.e. subtraction), outgoing edges from an operation are numbered according to the input operand index. However, the UD-chain graph (Fig. 3) still lacks control-flow completeness and a root.

Adding Control-flow info: The MLIR control regions aren’t depicted in (Fig. 3), resulting in an incomplete control structure of the code in Listing 5. A potential fix is to color nodes based on their region, denoted by an integer ID prefix to the node label. For instance, a node in region 2 (yellow) like std_cmpi would be labeled 2_std.cmpi. This allows us to group MLIR operations by regions, preserving control-flow information, as exemplified in (Fig. 4).

Rooting the graph: Numerous nodes lack incident edges in (Fig. 3), making the graph disjoint and rootless. These derive from operations with no return value (p.e. fir.store). To root the graph, we introduce “Region Edges”. These edges link a potential root to its parent RDO. Said edges are alphabetically labeled since the order of the regions in an RDO is relevant. For example, fir.if->A->fir.store represents a region edge. Combining these region edges with ud-chains yields a rooted graph. Since the wrapper function isn’t part of the match, the outer-most fir.if operation is not connected to its parent operation. Assuming no sequential RDOs in the wrapper function’s body, there’ll be a single root RDO after linking. In this case, this root RDO is the fir.if.

Stringification: Each code snippet is represented by an RDAG, and each RDAG can be converted into a set of strings. The stringification process consists of listing all the possible paths from the root to every leaf so that each path is composed by the concatenation of the identifiers labeled at the nodes and edges. The stringification process is exemplified in (Fig. 4) by the red and blue paths. The blue data-string represents the data-dependency execution path starting at the root fir.if operation (line 7), going to the fir.if operation (line 16) in the inner (yellow) region, and proceeding to the std.cmpi operation (line 15) that uses the constant std.const %c1_i32 (line 3). Conversely, the red data-string begins at the same root fir.if (line 7), continues to the inner region fir.if (line 16), but then diverges through region edge A to the 3_fir.store operation (line 17) of the pink region, which uses std.const %c0_i32 (line 4). Performing this process for every path in an idiom pattern DDG yields a set of data-strings representing the pattern.

Rewriting

Once an input snippet DDG matches a pattern, the rewrite specification can be applied. First, SMR eliminates operations reachable from the root operation matched in the input code. Then, said root operation is replaced with a call to the replacement function, and the function’s definition is injected into the input’s module. Since the removed code might use MLIR variables defined in parent regions, these variables are passed as arguments to the rewritten function. Global variables are accessed via special operations and attributes, rather than arguments.

Limitations

MLIR Structure Restrictions on Pattern Idioms: Currently SMR only handles idioms that are reducible CFGs, with additional constraints set for faster design: idiom patterns cannot have sequential RDOs in the wrapper function’s body; regions must contain exactly one basic block; operations must define at most one result operand. Some of these constraints may be removed in future versions.

Sensibility to Frontends and Dialects: Using source code to describe rewrites has the downside of depending on frontends that lower these codes to MLIR and their variations over time. Yet, by relying on MLIR’s common structure, SMR updates can be made with relative ease. Still, pattern and replacement must both be valid for the front-end used to compile the PAT file to MLIR, and idioms are type-specific due to the source code’s enforcement of types for every variable.

Limited Pattern Generality: MLIR allows SMR to easily adapt to multiple dialects and languages, but it has trade-offs. The high-level configurable representation makes it difficult to ensure correctness without using a rather strict DDG model, causing patterns to break due to small variations in the input code.

Experiments

Our experimental evaluations primarily aimed to validate SMR’s versatility as a rewriting tool. These tests conducted with earlier versions of MLIR, FIR, and SMR, assessed correctness, efficiency, flexibility, and scalability. All experiments were performed using a dual Intel Xeon Silver 4208 CPU @ 2.10GHz with 16 cores total and 191 GiB of RAM running Ubuntu 20.04. This section is a brief summary, for more information, please refer to the paper.

We replaced Fortran Polybench kernels with corresponding BLAS calls using the PAT language, leading to speed-ups of 3x to 518x. Some kernels couldn’t be replaced (or were only partially replaced) due to SMR’s limitations. Compilation overhead was between 5-20% across kernels.

For input scalability, we identified nine patterns in six large C programs using the CIL front-end, resulting in a linear increase in time per number of input lines. Pattern scalability was also tested by fixing an input size and varying the number of patterns. When ranging from 2000 to 10000 GEMM pattern variations, there was little variation in the matching runtime.

SMR was also compared qualitatively with similar tools, showing ease of use and moderate overhead but limitations in pattern generality. Possible ways to address this are to enhance the DDG model to be more generic and to generate pattern variations.

Other possible uses for SMR rewrites, such as approximate computing and hardware acceleration rewriting, were also briefly explored.

Notes

- The results presented here were measured with older versions of SMR, MLIR, and FIR.

- SMR support for CIL was dropped since there has been no effort to maintain it.

- We intend to include ports to CIR/Polygeist in future works.